Quantifying Consistency in LLM Logical Reasoning via Structural Uncertainty

Senior Applied Scientist · AWS GenAI Innovation Center

Reliable Reasoning, Calibration, and Uncertainty for AI Systems

I develop principled methods for evaluating and improving LLM reasoning, with a focus on self-evaluation, uncertainty, and mathematically grounded reliability.

Senior Applied Scientist

GenAI Innovation Center, AWS

Ph.D. Applied Mathematics, Yale

I study how AI systems reason, quantify uncertainty, and improve under limited supervision. At AWS, I develop methods that make large language models more reliable, better calibrated, and grounded in principled foundations.

My research combines mathematical rigor with practical AI systems — spanning LLM self-evaluation, Bayesian uncertainty, active learning, and information-theoretic foundations.

Before AWS, I worked at Samsung SDS Research America and Moody’s Analytics. I hold a Ph.D. in Applied Mathematics from Yale, a B.S. in Mathematics from KAIST, and was a Simons Postdoctoral Fellow at UT Austin.

My goal is to bridge mathematical rigor and real-world AI systems in ways that make advanced models more trustworthy and useful.

Measuring when LLMs can evaluate their own reasoning: logical consistency, self-correction, and verification.

Featured: Can LLMs Reliably Evaluate Themselves?

Calibration, confidence, and reliability under limited supervision, using Bayesian and information-theoretic approaches.

Featured: Balanced Entropy Active Learning

Information theory, entropy, Bayesian learning, and VC-style analysis as rigorous foundations for learning and inference.

Featured: Analytic Mutual Information in BNNs

I occasionally write about the principles behind reliable AI systems — reasoning, uncertainty, calibration, and the gap between benchmark performance and trustworthy deployment.

When initially disputed cases converge to unanimous wrong agreement, consensus becomes a deployment risk. Conformal prediction turns debate outputs into calibrated act-or-escalate decisions.
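As a rough illustration of the act-or-escalate idea, here is a minimal split-conformal sketch in Python. It is not the method from the essay or paper. The nonconformity score (one minus the share of agents voting for an answer), the function names `vote_shares`, `conformal_threshold`, and `act_or_escalate`, and the toy calibration data are all hypothetical assumptions. Under exchangeability, split conformal prediction guarantees the prediction set contains the true answer with probability at least 1 − alpha. The sketch acts only when that set is a single answer and escalates otherwise.

```python
import math
from collections import Counter

# Hypothetical sketch: split conformal prediction over multi-agent debate votes.
# Assumed nonconformity score: 1 - (fraction of agents voting for an answer).

def vote_shares(votes):
    """Fraction of agents that voted for each candidate answer."""
    counts = Counter(votes)
    return {answer: c / len(votes) for answer, c in counts.items()}

def conformal_threshold(cal_votes, cal_labels, alpha):
    """Split-conformal quantile of calibration scores at miscoverage level alpha."""
    scores = sorted(
        1.0 - vote_shares(votes).get(label, 0.0)
        for votes, label in zip(cal_votes, cal_labels)
    )
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # finite-sample corrected rank
    if k > n:
        return math.inf  # too few calibration cases: include every answer
    return scores[k - 1]

def act_or_escalate(votes, qhat, candidates):
    """Act on a singleton prediction set; otherwise (empty or larger set) escalate."""
    shares = vote_shares(votes)
    pred_set = [a for a in candidates if 1.0 - shares.get(a, 0.0) <= qhat]
    if len(pred_set) == 1:
        return "act", pred_set[0]
    return "escalate", pred_set

# Toy calibration data (illustrative only): three agents' final votes and the true answer.
cal_votes = [["A", "A", "A"], ["B", "B", "B"], ["C", "C", "C"],
             ["A", "A", "B"], ["A", "B", "B"], ["C", "C", "A"],
             ["A", "A", "A"], ["B", "B", "A"], ["A", "B", "B"]]
cal_labels = ["A", "B", "C", "A", "B", "C", "A", "B", "A"]

qhat = conformal_threshold(cal_votes, cal_labels, alpha=0.25)
print(act_or_escalate(["A", "B", "B"], qhat, ["A", "B", "C"]))  # ('act', 'B')
print(act_or_escalate(["A", "B", "C"], qhat, ["A", "B", "C"]))  # ('escalate', [])
```

Under this assumed score, a calibration case where the agents unanimously agree on a wrong answer receives the maximal score of 1. A calibration set with many such cases therefore raises the threshold, enlarges the prediction sets, and makes escalation more frequent.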

Can LLMs tell when they are wrong? A VC-theoretic framework exposes a capacity-calibration tension in model self-evaluation.

Answer agreement can mask reasoning instability. Self-preference rankings reveal failure modes that answer dispersion alone can miss.
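One simple way to see how answer agreement can hide reasoning instability is to measure the two separately. The Python sketch below is a hypothetical illustration, not the metric from the essay or the structural-uncertainty paper. It assumes each sampled run returns a final answer plus a ranking over a fixed set of candidate reasoning chains; the chain labels such as `c1` are invented for the example. Answer dispersion is scored with Shannon entropy, and ranking instability with mean pairwise normalized Kendall tau distance.

```python
import math
from collections import Counter
from itertools import combinations

# Hypothetical illustration: compare dispersion of final answers with instability
# of rankings over a fixed set of candidate reasoning chains across sampled runs.

def answer_entropy(answers):
    """Shannon entropy (bits) of the empirical distribution of final answers."""
    n = len(answers)
    return sum((c / n) * math.log2(n / c) for c in Counter(answers).values())

def kendall_tau_distance(r1, r2):
    """Fraction of item pairs that two rankings order differently (0 = same, 1 = reversed)."""
    pos1 = {item: i for i, item in enumerate(r1)}
    pos2 = {item: i for i, item in enumerate(r2)}
    pairs = list(combinations(r1, 2))
    discordant = sum((pos1[a] - pos1[b]) * (pos2[a] - pos2[b]) < 0 for a, b in pairs)
    return discordant / len(pairs)

def ranking_instability(rankings):
    """Mean pairwise Kendall tau distance across sampled rankings."""
    pairs = list(combinations(rankings, 2))
    return sum(kendall_tau_distance(r1, r2) for r1, r2 in pairs) / len(pairs)

# Toy example (illustrative only): three runs agree on the answer, disagree on the ranking.
answers = ["42", "42", "42"]
rankings = [["c1", "c2", "c3"], ["c3", "c2", "c1"], ["c2", "c1", "c3"]]
print(answer_entropy(answers))        # 0.0: no answer dispersion
print(ranking_instability(rankings))  # about 0.667: rankings are unstable
```

In the toy example every run returns the same answer, so answer entropy is zero. Yet the rankings disagree on two thirds of item pairs on average, so a dispersion-only check would call this case perfectly consistent.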

Also on Google Scholar.

From Debate to Decision: Conformal Social Choice for Safe Multi-Agent Deliberation

Quantifying Consistency in LLM Logical Reasoning via Structural Uncertainty

Reflect, Rewrite, Repeat: How Simple Arithmetic Enables Advanced Reasoning in Small Language Models

Can LLMs Reliably Evaluate Themselves? A Probabilistic VC Framework

Improving Instruction Following in Language Models through Proxy-Based Uncertainty Estimation

Bayesian Active Learning for Semantic Segmentation

Unsupervised Accuracy Estimation of Deep Visual Models using Domain-Adaptive Adversarial Perturbation without Source Samples

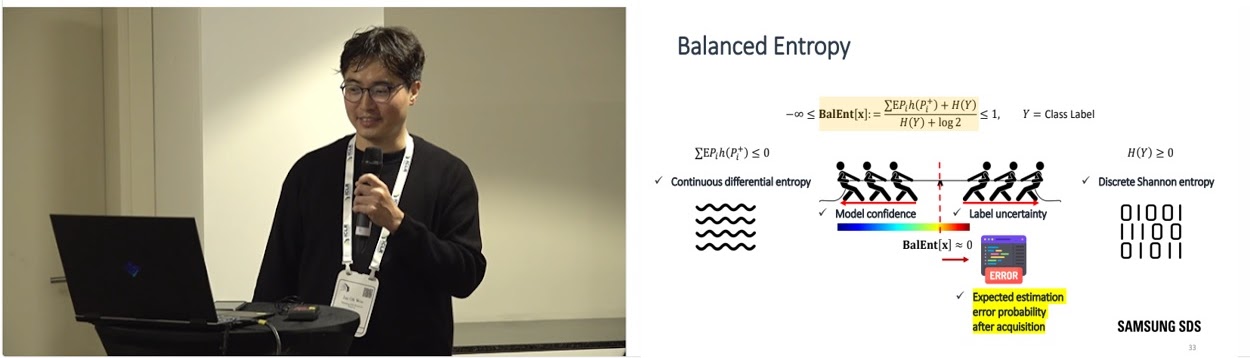

Active Learning in Bayesian Neural Networks with Balanced Entropy Learning Principle

Unsupervised Contrastive Representation Learning for 3D Mesh Segmentation

Analytic Mutual Information in Bayesian Neural Networks

Medical Image Labeling via Active Learning is 90% Effective

Verifying Measures of Quantum Entropy

Active Learning Performance in Labeling Radiology Images is 90% Effective

Highly Efficient Representation and Active Learning Framework for Imbalanced Medical Image Classification

PatchNet: Unsupervised Object Discovery Based on Patch Embedding

Majorization and Rényi Entropy Inequalities via Sperner Theory

On the Steady State of Continuous Time Stochastic Opinion Dynamics

Entropy Inequalities for Sums in Prime Cyclic Groups

An Analytical Framework for Modeling a Spatially Repulsive Cellular Network

On the Coverage Probability of a Spatially Correlated Network

On the Entropy and Mutual Information of Point Processes

A Discrete Entropy Power Inequality for Uniform Distributions

Information Theoretic Inequalities, Limit Theorems, and Universal Compression over Unknown Alphabets

A Lower Bound on the Rényi Entropy of Convolutions in the Integers

Redundancy of Exchangeable Estimators

A Note on Preconditioning by Low-Stretch Spanning Trees

Available for research collaboration, invited talks, and selected consulting on LLM reasoning, uncertainty, and evaluation.

jaeoh.woo@aya.yale.edu